“The saddest aspect of life right now is that science gathers knowledge faster than society gathers wisdom.”

- Isaac Asimov

In Fear and Trembling, Søren Kierkegaard’s pseudonymous author Johannes de Silentio admonished men who wanted to “go further” than faith, because they failed to realise that faith was a task for a lifetime. These rash individuals, whom he disparagingly called “assistant professors”, didn’t understand the first thing about faith if they planned to go beyond it. Today, assistant professors and Silicon Valley techies promise that one day we’ll surpass humanity by becoming transhuman. When we merge with super-intelligent AI, we’ll finally conquer our limitations, eradicate our suffering, and vanquish death. Oxford philosopher Nick Bostrom even argues that when we are enhanced by AI, we’ll be better equipped to promote the existential value of life. Transhuman 2.0s will be more effective than us measly human 1.0s at spreading the ideas of world-historical individuals like Plato, Nietzsche, Martin Luther King, Jr., and presumably even Kierkegaard. Bostrom thinks that, as transhumans, we will do life better, that is, if we don’t kill ourselves first.

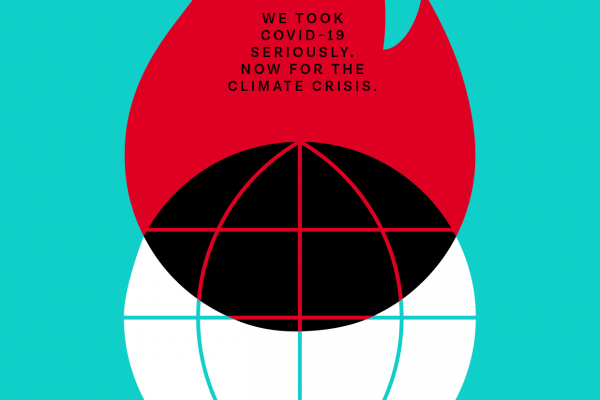

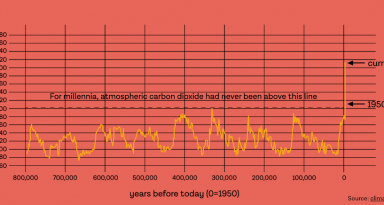

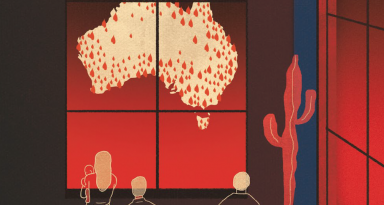

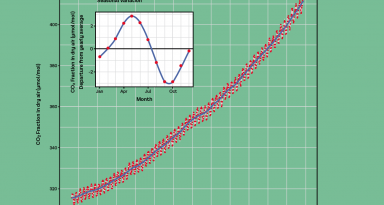

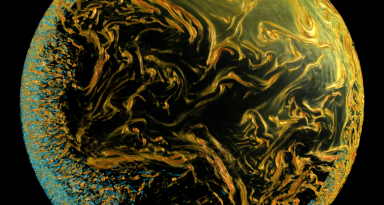

Before 1945, the only existential danger to humanity was comets, according to Existential Risk (X-risk) scholars. Shortly afterwards, we made the hydrogen bomb, raising the number of threats against humanity from one to two. Seventy-five years later we face 23 X-risks, according to Bostrom, and most are our own doing. That’s a dangerously sharp increase in the number of threats not just to my life but to yours. One man-made X-risk is climate change. Another is our robots going Oedipal on us. We seem to be speeding up our extinction, not reducing its likelihood.

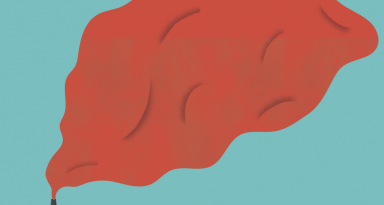

There is something that feels fated about the race to kill ourselves – I suspect that we’re engineering our doom, although perhaps behind our own backs. I’m not talking about Nick Land’s flavour of accelerationism, where we intentionally cut to the worst-case scenario to see what comes next. I mean something more idiotic and common, like when a factory directs its toxic drainpipe into a nearby lake. We’ve been designing our end and calling it progress for decades now, until someone labelled it global warming.

The transhumanist fix for global warming – the problem of humans’ excessive control over nature – is more control over nature. Technological pessimist Philippe Verdoux thinks we are too deep in the problem to quit now. So, while he admits that we get ourselves into jams like these – global warming, vicious robots, and other X-risks – by doubling down on technology, he thinks we can only save ourselves by turning to it: befriending it. The idea is that we’ll just make things worse if we start pushing hackers underground; at least above ground they can be regulated.

If the pessimist’s turn to technology surprises us, the techno-optimists’ enthusiasm for it shouldn’t. They believe we’re wasting the little time we have left to save ourselves before Boston sinks into the Atlantic. The problem for these transhumanists isn’t that we have manipulated nature; it’s that we haven’t done it enough. They propose that we aggressively fund technology and science to beat the Earth’s overheating. Technogaians constitute a contentious type of environmentalist capable of joining libertarians and democrats on the issue of clean tech. By encouraging AI, genetic engineering, and bio-hacking, they think we can reverse global warming, feed everyone, find cures to diseases, and possibly also live forever. Gennady Stolyarov, chairman of the Transhumanist Party, made a list of technological solutions to combat climate change that includes self-driving cars and GMOs. He supports U.S. Republican 2020 presidential candidate Zoltan Istvan, who also believes that by funding tech we can beat the Earth’s collapse and, in the process, become superior versions of ourselves.

Didn’t social media in 2019 guarantee that the definition of insanity most often attributed (probably incorrectly) to Albert Einstein would forever be seared into our brains? If we recall that in the last 75 years we’ve aggressively multiplied our X-risks, and yet we insist on doing more of what got us here, then we’ve gone bananas. Hubris had already been discredited by the time it became a sin, and yet it nicely names why we keep trying to take ourselves out, only to throw up a hasty Hail Mary at the eleventh hour. It’s nicer to call it curiosity.

Instead of assistant professors, let’s imagine transhumanists as Pandoras, except that instead of the Gods ordering them not to open their box of ills, the warning comes from Luddites: a handful of philosophers and religious geezers who still believe in outdated concepts like hubris. Naturally, the transhumanists wave them off, sign on to the project of vanquishing death, and get to work bio- and nano-hacking their way to immortality. In contrast to the Homeric and Biblical literature that paints curiosity as a woman, these Pandoras are overwhelmingly male, and many come from Silicon Valley. Curious men striving to overcome limitation is not a new story, and not even a new deadly story, when you consider colonialism’s obsession with discovery and expansion. But in addition to killing or enslaving those people over there and greedily extracting from the planet its shiniest and tastiest treats, now we’re cannibalising ourselves. Perhaps Pandora wasn’t propelled by curiosity (or hubris) after all, but by self-loathing.

Nietzsche accused the priestly class of promoting weakness and shaming strength, in short, of hating humanity. The Ray Kurzweils of transhumanism also seem to hate humanity (though they sometimes lean on Nietzsche’s Superman for credibility) by targeting for removal everything that makes us us, what tech critic and lay philosopher Evgeny Morozov calls features of humanity, not bugs. My list captures our least photogenic – but all too human – side: pain, suffering, sadness, inefficiency, mistakes, aporias, frailty, hesitation, doubt, confusion, fragility, and of course, death. When we vanquish death we’ll no longer fear it, the logic goes, and ditto for suffering and limitation. But death, suffering, and limitation are three of the most existentially recognisable features of humanity, not to mention fear, angst, care, sadness, loneliness, despair, and grief. Trying to eradicate these “weaknesses” sounds like toxic masculinity’s been given security clearance and a parking spot. When we eliminate our unsightly existential features, what of us will remain?

In 1850, Arthur Schopenhauer wrote that humans would wage war on each other out of boredom if suffering were eradicated. Perhaps boredom best explains the increase in X-risks; that, or an old-fashioned fear of death. Martin Heidegger located the uniqueness of human existence in its ability to care about itself and worry about its own extinction. Almost everything we do counts for him as “fleeing from death,” which makes the transhumanists’ flight from death two-fold and ironic: they’ll hasten death out of disguised terror.

Twenty-three X-risks cause us more anxiety than one did, so if Kierkegaard was right that anxiety makes us human, then we’re headed into a still more human future, not a transhuman one. And we’ll never get further than humanity as long as we bungle humanity as badly as Kierkegaard’s assistant professors bungled faith. We need a new existentialism to help us see that a human being with no existential features simply isn’t one. Just as Kierkegaard’s assistant professors were surprised that they couldn’t get beyond faith, I predict that transhumanists will be surprised that they can’t get beyond being human without losing their humanity – that Bostrom’s idea of broadcasting the juiciest bits of humanity out of a Turing-approved voice box will fail to catch. In the end, there is no transhuman; there’s only human life and human death, which, like faith, is a task for a lifetime.
